Getting CLI authentication right: the complete guide to all 4 methods

The 4 CLI auth methods that matter, how GitHub, AWS, and AI tools implement them, and the security mistakes you'll want to avoid.

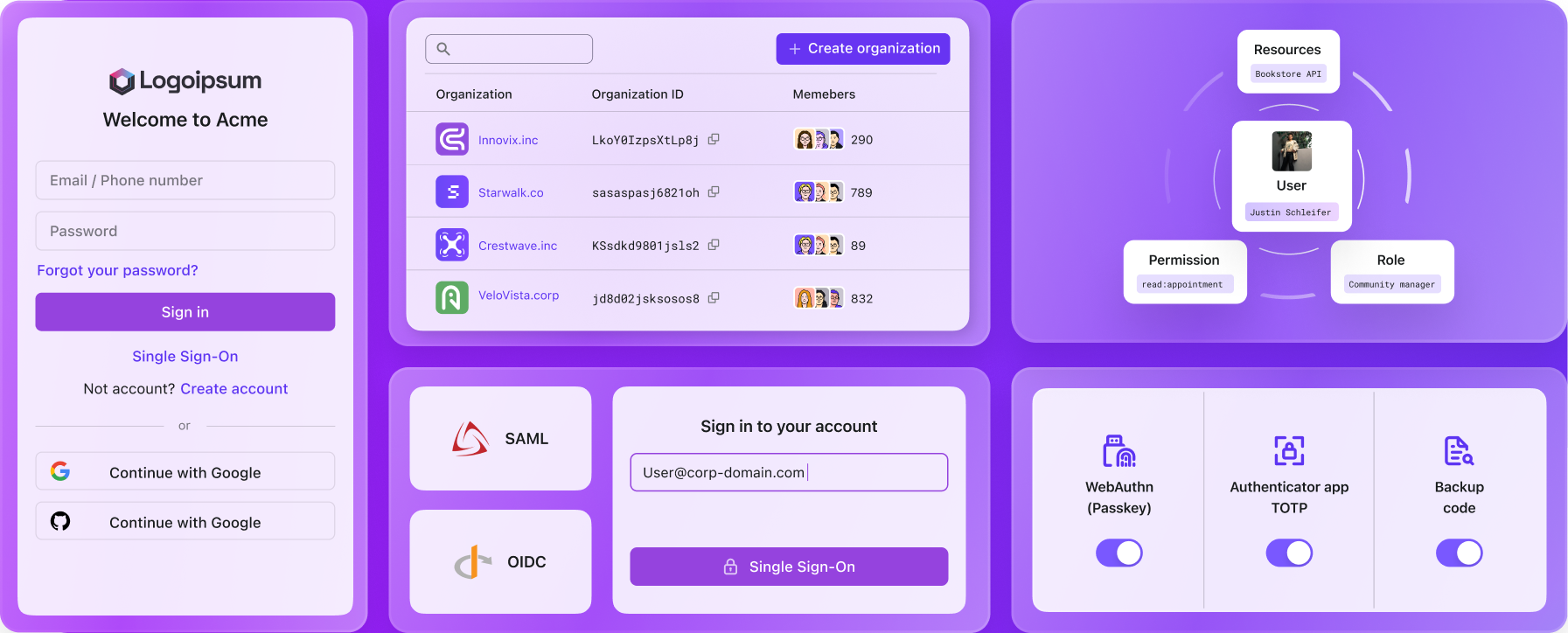

Every developer CLI ships with login as its first command. And every one of them solves auth differently.

GitHub shows you a code and opens your browser to verify it. AWS opens a browser for PKCE-based SSO. Stripe has you confirm a pairing code in the dashboard. The newer AI tools (Claude Code, OpenAI Codex CLI, Cursor) each picked their own approach too.

If you're building a CLI, auth is one of the first things you need to figure out. Pick the wrong method and you'll hear about it: frustrated users, security audits, or both. And with the recent wave of AI coding agents that call CLI tools programmatically, the stakes are higher: you're not just authenticating a human anymore. You might be handing credentials to an autonomous process.

Here are the four auth methods that matter, how the biggest tools implement them, and the mistakes you'll want to avoid.

The four methods at a glance

Before going deep, here's the quick comparison:

| Method | Best for | Security | Needs browser? |
|---|---|---|---|
| OAuth Device Code Flow | Headless environments, SSH | High | No (on the same machine) |
| Browser-based OAuth (localhost redirect) | Local development | Highest | Yes |
| API Keys / PATs | Automation, CI/CD, quick prototyping | Moderate | No |
| Client Credentials | Machine-to-machine, services | High | No |

Each has tradeoffs. Here's what you need to know about each one.

1. OAuth device code flow (RFC 8628)

This is the one where your CLI shows you a code like ABCD-1234 and a URL, then tells you to open that URL on any device and enter the code.

Who uses it: GitHub CLI (default), Azure CLI (via --use-device-code), Vercel CLI (recently switched to this as default), OpenAI Codex CLI (as a beta option)

How it works

- You run cli login

- The CLI requests a device code from the auth server, sending its client_id and requested scopes

- The server returns three things: a device_code (internal identifier), a user_code (the short code you type), and a verification_uri (where to go)

- Your CLI displays the code and URL, then starts polling the auth server every 5+ seconds

- You open the URL on any device (phone, laptop, a different computer), type in the code, and authenticate however you want (password, SSO, passkeys, MFA)

- Once you approve, the next poll returns an access token and refresh token

- The CLI stores these and you're in
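
The first half of those steps can be sketched in a few lines of Python. This is a minimal illustration, not any particular CLI's implementation: the post callable, the endpoint URL, and the function names are all assumptions, and the transport is injected so the parsing logic can be exercised without a real auth server.

```python
from dataclasses import dataclass
from typing import Any, Callable, Mapping

# Hypothetical transport: takes a URL and form fields, returns the decoded JSON body.
PostForm = Callable[[str, Mapping[str, str]], Mapping[str, Any]]


@dataclass(frozen=True)
class DeviceAuthorization:
    device_code: str       # internal identifier, never shown to the user
    user_code: str         # the short code the user types
    verification_uri: str  # where the user goes to enter it
    expires_in: int        # lifetime of the codes, in seconds
    interval: int          # minimum seconds between polls


def request_device_code(post: PostForm, endpoint: str,
                        client_id: str, scope: str) -> DeviceAuthorization:
    """Ask the auth server for a device code and user code."""
    body = post(endpoint, {"client_id": client_id, "scope": scope})
    return DeviceAuthorization(
        device_code=str(body["device_code"]),
        user_code=str(body["user_code"]),
        verification_uri=str(body["verification_uri"]),
        expires_in=int(body["expires_in"]),
        # RFC 8628: fall back to 5 seconds when the server omits the interval.
        interval=int(body.get("interval", 5)),
    )


def login_prompt(auth: DeviceAuthorization) -> str:
    """The message the CLI prints before it starts polling."""
    return f"Open {auth.verification_uri} and enter code {auth.user_code}"
```

Only the user_code and verification_uri are ever displayed; the device_code stays inside the CLI and is used for polling.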

Why developers like it

The big selling point: it works anywhere. SSH session into a remote server? Works. Running inside a Docker container? Works. Cloud IDE with no local browser? Works. The browser doesn't need to be on the same machine as the CLI.

It also supports the full range of enterprise auth (SAML, OIDC, MFA) because all of that happens in the browser, not in the terminal. The CLI never sees your password.

The security catch most people miss

Device code flow has a phishing problem. An attacker can initiate a device code request, get a legitimate user code, and trick you into entering it, effectively authorizing the attacker's session. This isn't theoretical. Security researchers have documented this attack against AWS SSO device code auth.

This is a big enough concern that AWS changed their default. Starting with AWS CLI v2.22.0, the default for aws sso login switched from device code flow to PKCE-based authorization code flow. Device code is still available via --use-device-code, but it's no longer the default path.

Meanwhile, Microsoft's own tenant has started blocking device code flow entirely via conditional access policies, a strong signal that they consider it a high-risk authentication method.

So we have an interesting split: Vercel adopted device code flow as their default in September 2025, while AWS moved away from it. The pattern seems to be that device code flow is great for environments where you genuinely can't open a browser, but if you can open one locally, PKCE is the safer bet.

On the auth provider side, the demand is clearly growing. Logto just shipped OAuth 2.0 Device Authorization Grant support for native apps in v1.38.0 (open source) and Logto Cloud, so you can now enable device flow as the authorization method for any native application. This matters if you're building a CLI. Implementing RFC 8628 correctly (code expiry, rate limiting, polling logic, the sign-in UX on the verification page) is more work than most teams expect, and having your auth provider handle it means you just need to make the right HTTP calls.

Technical details from the RFC

A few things worth knowing from RFC 8628:

- The expires_in value for device codes is set by the auth server. The RFC uses 1800 seconds (30 minutes) as an example, but it's not a fixed requirement.

- If the server doesn't specify a polling interval, clients must default to 5 seconds.

- If you get a slow_down error, add 5 seconds to your interval.

- Device codes should be single-use and expire quickly.

- All token exchanges must happen over HTTPS.
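
Those polling rules translate into a fairly small loop. The sketch below assumes a hypothetical post callable that returns the decoded JSON body of the token endpoint response; the function and exception names are illustrative, and the sleep and clock parameters exist only so the loop can be tested without waiting.

```python
import time
from typing import Any, Callable, Mapping

# Grant type defined by RFC 8628 for the token request.
DEVICE_GRANT = "urn:ietf:params:oauth:grant-type:device_code"


class DeviceFlowError(Exception):
    """Raised when the user denies the request or the code expires."""


def poll_for_token(post: Callable[[str, Mapping[str, str]], Mapping[str, Any]],
                   token_endpoint: str, client_id: str, device_code: str,
                   expires_in: int, interval: int = 5,
                   sleep: Callable[[float], None] = time.sleep,
                   clock: Callable[[], float] = time.monotonic) -> Mapping[str, Any]:
    deadline = clock() + expires_in
    while clock() < deadline:
        sleep(interval)  # wait first: the user needs time to approve anyway
        body = post(token_endpoint, {
            "grant_type": DEVICE_GRANT,
            "device_code": device_code,
            "client_id": client_id,
        })
        error = body.get("error")
        if error is None:
            return body              # contains the access (and refresh) token
        if error == "authorization_pending":
            continue                 # user has not approved yet
        if error == "slow_down":
            interval += 5            # back off by 5 seconds, as the RFC requires
            continue
        raise DeviceFlowError(error)  # e.g. access_denied, expired_token
    raise DeviceFlowError("expired_token")
```

Treating every unknown error as fatal keeps the loop from polling forever against a server that has already rejected the request.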

2. Browser-based OAuth (localhost redirect)

This is the most common method for CLIs running on a developer's local machine. You run login, your browser opens, you authenticate, and the browser redirects back to a local server the CLI spun up. Modern implementations add PKCE (pronounced "pixie") on top, which makes the flow much harder to exploit.

Who uses it: Claude Code, gcloud CLI, Terraform CLI, AWS CLI v2.22+ (for SSO, with PKCE as default)

How it works

- You run cli login

- The CLI starts a temporary HTTP server on a random local port (say http://127.0.0.1:8742)

- It opens your default browser to the auth provider's authorization endpoint, passing that localhost URL as the redirect URI

- You authenticate in the browser (SSO, password, passkeys, whatever the provider supports)

- The auth provider redirects your browser to http://127.0.0.1:8742/callback?code=XXXX&state=YYYY

- The local server captures the authorization code, exchanges it for tokens via a back-channel HTTPS request

- The browser shows a "Success! You can close this tab" page

- The CLI stores the tokens and shuts down the local server
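
The local-server half of that flow can be sketched with Python's standard library. The class and function names here are assumptions made for illustration; opening the browser and exchanging the code for tokens are left out so the example stays focused on capturing the redirect.

```python
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer
from urllib.parse import parse_qs, urlparse


class CallbackServer(HTTPServer):
    """Loopback-only server that records the query of the first /callback hit."""

    def __init__(self) -> None:
        # Port 0 lets the OS pick a free port; the host is loopback only.
        super().__init__(("127.0.0.1", 0), _CallbackHandler)
        self.params: dict = {}
        self.done = threading.Event()

    @property
    def redirect_uri(self) -> str:
        host, port = self.server_address[:2]
        return f"http://{host}:{port}/callback"


class _CallbackHandler(BaseHTTPRequestHandler):
    def do_GET(self) -> None:
        url = urlparse(self.path)
        if url.path != "/callback":
            self.send_error(404)
            return
        # Keep the first value of each parameter (code, state, error, ...).
        self.server.params = {k: v[0] for k, v in parse_qs(url.query).items()}
        body = b"Success! You can close this tab."
        self.send_response(200)
        self.send_header("Content-Type", "text/plain; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)
        self.server.done.set()

    def log_message(self, format, *args) -> None:
        pass  # the default logger would print the code and state to stderr


def wait_for_callback(server: CallbackServer, timeout: float = 120.0) -> dict:
    """Serve until the redirect arrives, then shut the server down."""
    thread = threading.Thread(target=server.serve_forever, daemon=True)
    thread.start()
    try:
        if not server.done.wait(timeout):
            raise TimeoutError("no OAuth callback received")
        return server.params
    finally:
        server.shutdown()
        server.server_close()
```

A CLI built this way would create the server first, pass server.redirect_uri in the authorization request, open the browser, and then call wait_for_callback. The returned state still has to be checked before the code is exchanged.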

The user experience is smooth. No codes to copy, no URLs to type. Just a browser tab that opens and closes.

When it falls apart

This only works when the CLI can open a browser and bind to localhost. That rules out:

- SSH sessions to remote servers

- Docker containers (unless you do port forwarding gymnastics)

- CI/CD pipelines

- Headless servers

- Some restricted corporate environments

That's why most tools that default to browser OAuth also support a fallback, typically device code flow or API keys.

Three security pitfalls that keep showing up

Pitfall 1: Binding to 0.0.0.0 instead of 127.0.0.1

This is the most common mistake, and it's severe. If your callback server listens on all interfaces, anyone on the same network can intercept the authorization code.

I've seen this in production CLIs. It's an easy mistake to make because many HTTP server libraries default to 0.0.0.0.
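
One cheap defence is to make the loopback bind explicit and verify it after the socket is created. A minimal sketch, assuming Python's built-in http.server; the helper name is hypothetical:

```python
import ipaddress
from http.server import BaseHTTPRequestHandler, HTTPServer


def make_loopback_server(handler=BaseHTTPRequestHandler) -> HTTPServer:
    """Create a callback server that is reachable from this machine only."""
    # Passing "" or "0.0.0.0" as the host here would listen on every interface.
    server = HTTPServer(("127.0.0.1", 0), handler)
    host = server.server_address[0]
    if not ipaddress.ip_address(host).is_loopback:
        server.server_close()
        raise RuntimeError(f"callback server bound to non-loopback address {host}")
    return server
```

The check looks redundant next to the hard-coded address, but it catches the case where the host later becomes a config option or a refactor swaps in a library default.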

Pitfall 2: Missing state parameter validation

The state parameter is your CSRF protection. Without it, an attacker can trick your CLI into accepting an authorization code from a malicious session.
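
A sketch of what that validation can look like, using only the standard library. The function names are illustrative; the important parts are an unpredictable value generated per login attempt and a strict comparison on the way back.

```python
import hmac
import secrets
from typing import Optional


def new_state() -> str:
    """Generate an unguessable state value for one login attempt."""
    return secrets.token_urlsafe(32)


def state_matches(expected: str, received: Optional[str]) -> bool:
    """Return True only if the callback carried exactly the state we sent."""
    if not received:
        return False  # a missing state is a failure, not a pass
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(expected.encode(), received.encode())
```

If state_matches returns False, the CLI should discard the authorization code and abort the login rather than continue to the token exchange.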

Pitfall 3: Not using PKCE

If your OAuth flow doesn't use PKCE (Proof Key for Code Exchange), the authorization code can be intercepted and replayed.

In a standard authorization code flow, if an attacker intercepts the authorization code (through the network, or by reading the redirect URL), they can exchange it for tokens. PKCE prevents this by requiring proof that the token exchange was initiated by the same client that started the authorization request.

Here's what PKCE adds to the flow:

- Before starting the flow, the CLI generates a random code_verifier (a high-entropy string)

- It creates a code_challenge by hashing the verifier with SHA-256 and base64url-encoding the digest

- The challenge is sent with the initial authorization request

- When exchanging the code for tokens, the CLI sends the original code_verifier

- The auth server verifies that the verifier matches the challenge

An attacker who intercepts the authorization code doesn't have the code_verifier, so they can't complete the exchange.
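
Generating the verifier and challenge takes only a few lines. This sketch follows the S256 method from RFC 7636 (SHA-256, then base64url without padding); the function names are assumptions.

```python
import base64
import hashlib
import secrets


def new_code_verifier() -> str:
    """Return a fresh high-entropy verifier for one authorization attempt."""
    # 32 random bytes encode to 43 URL-safe characters, the RFC 7636 minimum.
    raw = secrets.token_bytes(32)
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode("ascii")


def code_challenge_s256(verifier: str) -> str:
    """Derive the challenge sent in the authorization request."""
    digest = hashlib.sha256(verifier.encode("ascii")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")
```

The authorization request carries the challenge together with code_challenge_method=S256, and the verifier is kept in memory until the token exchange.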

This is why AWS CLI v2.22+ made PKCE the default for SSO, moving away from device code flow. When the CLI can open a browser on the same machine, browser OAuth with PKCE is the stronger choice: there's no code to copy, and no user code an attacker can trick you into approving. Device code flow is still the right choice when the browser can't be on the same machine (SSH, containers, remote dev environments), but that's not the common case for local development.

3. API keys and personal access tokens

The simplest approach. You generate a token in a web dashboard, paste it into your CLI config or an environment variable, and you're done.

Who uses it: Stripe CLI (as a login option), npm, pip, most AI coding tools as a fallback (Claude Code via ANTHROPIC_API_KEY, OpenAI tools via OPENAI_API_KEY, Aider)

How it works

- Log into the service's web dashboard

- Go to settings → API keys (or personal access tokens, or developer tokens)

- Generate a new key, usually a long random string with a prefix (e.g., sk_live_, ghp_, npm_)

- Store it in a config file (~/.config/stripe/config.toml, ~/.aws/credentials) or an environment variable

The CLI reads it on startup and includes it in API requests, typically as a Bearer token in the Authorization header.
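
On the CLI side this is about as simple as auth gets. A minimal sketch, where EXAMPLE_API_KEY is a made-up variable name and the environ parameter exists only to make the function easy to test:

```python
import os
from typing import Mapping


def auth_headers(env_var: str = "EXAMPLE_API_KEY",
                 environ: Mapping[str, str] = os.environ) -> dict:
    """Build the Authorization header from an API key in the environment."""
    # Strip whitespace: keys pasted into shell profiles often carry a newline.
    key = environ.get(env_var, "").strip()
    if not key:
        raise RuntimeError(f"{env_var} is not set; export it or run the login command")
    return {"Authorization": f"Bearer {key}"}
```

Note that the error message names the variable but never echoes its value.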

Why it's still popular despite the risks

For automation, API keys are hard to beat. They work in CI/CD, in containers, in scripts, in cron jobs. Anywhere that can read an environment variable. No browser needed. No interactive prompts. No token refresh dance.

For AI agent workflows especially, API keys are the path of least resistance. When Claude Code or Cursor needs to call an API, an environment variable with an API key is the simplest integration point.

The risks are real

- Leaks. API keys end up in git commits, log files, error messages, and CI output. GitHub scans for exposed tokens and reports over a million leaked secrets per year.

- Overprivileged. Most API keys grant broad access. If leaked, the blast radius is large.

- No MFA. API keys bypass your carefully configured multi-factor auth.

- Hard to rotate. Every time you rotate a key, you need to update it everywhere it's stored. With teams, that's a coordination problem.

Modern enhancement: temporary token exchange

The smart move when using API keys: exchange them for short-lived tokens at runtime.

AWS pioneered this with STS (Security Token Service). Your long-lived credentials are only used to request temporary credentials that expire quickly (one hour is a common default). Tools like aws-vault automate this entirely.

Even if you start with API keys, consider adding this pattern. It limits the damage window of a compromised key from "until someone notices" to "one hour."
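
The pattern is independent of any one provider. The sketch below is a generic cache, not the AWS SDK: the exchange callable is a hypothetical stand-in for whatever call trades your long-lived key for a temporary token and reports its lifetime.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Optional, Tuple


@dataclass
class TemporaryCredentials:
    # Hypothetical: calls the provider and returns (token, lifetime_in_seconds).
    exchange: Callable[[], Tuple[str, float]]
    clock: Callable[[], float] = time.monotonic
    skew: float = 60.0  # refresh this many seconds before the real expiry
    _token: Optional[str] = field(default=None, init=False, repr=False)
    _expires_at: float = field(default=0.0, init=False, repr=False)

    def get(self) -> str:
        """Return a valid short-lived token, exchanging again when it is stale."""
        if self._token is None or self.clock() >= self._expires_at - self.skew:
            token, lifetime = self.exchange()
            self._token = token
            self._expires_at = self.clock() + lifetime
        return self._token
```

Every request then uses credentials.get() instead of the long-lived key, so the key itself only travels during the exchange call.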

4. Client credentials flow

This OAuth 2.0 flow is designed for machine-to-machine auth: services talking to services, no human involved.

Used in: CI/CD pipelines, background services, automated tooling

How it works

The service sends its client_id and client_secret directly to the auth server and gets back a short-lived access token. No browser, no user interaction, no redirect.
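
As a sketch, here is how that token request can be built with Python's standard library. The function name and URL are illustrative. This version sends the credentials with HTTP Basic auth, which assumes the client ID and secret contain no characters that need extra encoding; some providers expect them in the form body instead.

```python
import base64
from urllib.parse import urlencode
from urllib.request import Request


def client_credentials_request(token_url: str, client_id: str,
                               client_secret: str, scope: str = "") -> Request:
    """Build (but do not send) a client credentials token request."""
    fields = {"grant_type": "client_credentials"}
    if scope:
        fields["scope"] = scope
    basic = base64.b64encode(f"{client_id}:{client_secret}".encode()).decode("ascii")
    return Request(
        token_url,
        data=urlencode(fields).encode("ascii"),
        headers={
            "Authorization": f"Basic {basic}",
            "Content-Type": "application/x-www-form-urlencoded",
        },
        method="POST",
    )
```

Passing the result to urllib.request.urlopen sends it; the JSON response carries the access_token and its expires_in lifetime.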

When to use it

Use client credentials when:

- A service or bot needs to authenticate, not a human

- You're in a CI/CD pipeline

- You need automated, unattended access

- The "user" is the application itself

Don't use it for human authentication. It doesn't support MFA, SSO, or any interactive verification.

What real CLIs actually use

Here's a corrected breakdown based on current documentation and source code. A lot of articles get this wrong because tools keep changing their defaults.

| CLI Tool | Default auth | Fallback options | Token storage |
|---|---|---|---|
| GitHub CLI (gh) | Device code flow via browser | PAT (--with-token), env var (GH_TOKEN) | OS keychain (fallback: plain text file) |
| AWS CLI v2 | PKCE auth code flow (SSO) | Device code (--use-device-code), credential files | ~/.aws/sso/cache/ |
| Azure CLI (az) | WAM on Windows; browser auth code flow on Linux/macOS | Device code (--use-device-code) | ~/.azure/msal_token_cache.* |
| Vercel CLI | Device code flow (new default, Sep 2025) | API token (--token, env var) | ~/.local/share/com.vercel.cli/auth.json |
| Stripe CLI | Browser-based pairing flow | API key (--interactive, --api-key, or env var) | ~/.config/stripe/config.toml |
| gcloud CLI | Browser OAuth | --no-browser manual flow | ~/.config/gcloud/ |
| Claude Code | Browser OAuth | API key (env var, apiKeyHelper) | OS keychain / ~/.claude/.credentials.json |
| OpenAI Codex CLI | Browser OAuth | Device code (beta), API key | ~/.codex/auth.json / OS keyring |
| Terraform CLI | Browser OAuth | Token paste | ~/.terraform.d/credentials.tfrc.json |

The trend is clear: browser-based OAuth is the default for local development, with device code flow as the headless fallback, and API keys for automation. PKCE is gaining ground as the most secure option when a browser is available.

Token storage: what to use, what to avoid

Getting auth right doesn't matter if you store the tokens wrong.

The right way: OS keychains

Every major OS has a built-in encrypted credential store:

- macOS: Keychain (used by GitHub CLI, Claude Code)

- Windows: Credential Manager

- Linux: Secret Service API (GNOME Keyring, KDE Wallet)

These provide OS-level encryption, access control, and hardware security integration. Your CLI doesn't need to implement its own crypto.

The fallback: encrypted config files

When a keychain isn't available (containers, minimal Linux installs), use encrypted config files with restrictive permissions.
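
The permissions half of that advice can be sketched as follows. The function name is hypothetical, the mode bits only have this meaning on POSIX systems, and the encryption step is deliberately left out because it depends on where the key would come from.

```python
import json
import os
from pathlib import Path


def save_credentials(path: Path, tokens: dict) -> None:
    """Write tokens to a file only the current user can read (POSIX modes)."""
    path.parent.mkdir(parents=True, exist_ok=True, mode=0o700)
    tmp = path.with_name(path.name + ".tmp")
    # Create the file with 0600 from the start so it is never world-readable.
    fd = os.open(tmp, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o600)
    with os.fdopen(fd, "w", encoding="utf-8") as fh:
        json.dump(tokens, fh)
    os.chmod(tmp, 0o600)   # tighten a stale temp file left with looser bits
    os.replace(tmp, path)  # atomic swap: readers never see a half-written file
```

Creating the file and then calling chmod on it afterwards leaves a short window where other users can read it, which is why the mode is passed to os.open directly.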

What to avoid

Plaintext files. This should be obvious, but plenty of tools still do it. A plaintext token file is accessible to every process running as your user, every backup tool, and anyone who gets temporary access to your machine.

Environment variables for long-term storage. Environment variables are visible in process listings, often logged by crash reporters, and inherited by child processes. They're fine for CI/CD (where the CI platform manages the secret) but risky for local development.

Browser localStorage. If your CLI has a web component, don't store tokens in localStorage. One XSS vulnerability exposes everything.

Token lifecycle management

Access tokens

Keep them short-lived. One hour is the common standard. When an access token expires, the CLI should transparently refresh it. The user shouldn't have to re-login for routine operations.

Refresh tokens

Refresh tokens are the long-lived credentials that let you get new access tokens without re-authenticating. They're high-value targets for attackers because of their longer lifespan (days to months).

Refresh token rotation

Modern auth systems rotate refresh tokens on every use:

- CLI sends refresh token to get a new access token

- Auth server returns a new access token and a new refresh token

- The old refresh token is immediately invalidated

- CLI stores both new tokens

This limits the damage of a stolen refresh token. If an attacker tries to use a refresh token that the legitimate CLI already used, the auth server detects the reuse and invalidates the entire token family. Both the attacker's stolen token and the legitimate user's current token stop working, forcing re-authentication but preventing the attacker from maintaining access.
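
To make the reuse-detection logic concrete, here is a toy in-memory model of the server side. It is an illustration of the idea, not how any specific auth server stores tokens, and all names are made up.

```python
import secrets


class InvalidGrant(Exception):
    """The presented refresh token is unknown, revoked, or already used."""


class RefreshTokenStore:
    def __init__(self) -> None:
        self._family_of = {}  # every token ever issued -> its family id
        self._current = {}    # family id -> the single token still valid

    def issue(self) -> str:
        """Start a new token family at login time."""
        return self._mint(secrets.token_hex(8))

    def _mint(self, family: str) -> str:
        token = secrets.token_urlsafe(32)
        self._family_of[token] = family
        self._current[family] = token
        return token

    def rotate(self, presented: str) -> str:
        """Swap a refresh token for a new one, revoking the family on reuse."""
        family = self._family_of.get(presented)
        if family is None or family not in self._current:
            raise InvalidGrant("unknown or revoked refresh token")
        if self._current[family] != presented:
            # An old token came back: either the client or a thief is replaying.
            del self._current[family]
            raise InvalidGrant("refresh token reuse detected")
        return self._mint(family)
```

Keeping used tokens in the family map is what makes detection possible: the server can tell a replayed token apart from one it has never seen.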

Common pitfalls (with code)

1. Binding the callback server to all interfaces

Already covered above, but worth repeating: always bind to 127.0.0.1, never 0.0.0.0.

2. Logging tokens

This happens more often than anyone admits. A debug log statement, an error handler that dumps the request headers, a verbose mode that prints everything.
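
One safety net is a logging filter that scrubs anything token-shaped before it reaches a handler. This is a sketch with an assumed, deliberately small pattern list; a real CLI would extend it to match its own token formats.

```python
import logging
import re

# Matches "Bearer <token>" and common token-bearing query/form parameters.
_SECRET = re.compile(
    r"(?i)\b(bearer\s+|(?:access_token|refresh_token|api_key)=)([^\s&\"']+)"
)


class RedactSecrets(logging.Filter):
    """Replace token values in log records with a placeholder."""

    def filter(self, record: logging.LogRecord) -> bool:
        message = record.getMessage()  # message with %-style args applied
        redacted = _SECRET.sub(r"\1[REDACTED]", message)
        if redacted != message:
            record.msg, record.args = redacted, ()
        return True  # never drop the record, only clean it
```

Attach it to each handler with handler.addFilter(RedactSecrets()) so that records from every logger pass through it. It is a backstop, not a substitute for keeping tokens out of log calls in the first place.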

3. Baking credentials into container images

Docker images are not secret stores. Every layer is extractable.

4. Not handling token expiry gracefully

When a token expires mid-operation, don't just crash with a 401 error. Attempt a refresh, and only prompt for re-authentication if the refresh token is also expired.
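
That retry logic fits in one small wrapper. In this sketch send and refresh are hypothetical callables supplied by the CLI: send performs the API call with a given access token, and refresh returns a new access token or None when the refresh token no longer works.

```python
from typing import Any, Callable, Optional, Tuple


class ReauthenticationRequired(Exception):
    """Both the access token and the refresh token are unusable."""


def call_with_refresh(send: Callable[[str], Tuple[int, Any]],
                      refresh: Callable[[], Optional[str]],
                      access_token: str) -> Tuple[int, Any]:
    """Run an API call, refreshing the access token once on a 401."""
    status, body = send(access_token)
    if status != 401:
        return status, body
    new_token = refresh()  # expected to persist the new tokens itself
    if new_token is None:
        raise ReauthenticationRequired("session expired; run the login command again")
    # Retry exactly once; a second 401 is returned to the caller as-is.
    return send(new_token)
```

Retrying only once matters: a loop that refreshes on every 401 can hammer the auth server when the real problem is a missing permission rather than an expired token.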

5. Ignoring the principle of least privilege

Don't request admin:* scope when you only need repo:read. This applies to both OAuth scopes and API key permissions.

When an AI agent is using your CLI, this becomes even more important. You probably don't want an AI assistant to have delete-repo permissions just because it needs to read code.

The AI agent wrinkle

Here's what makes 2026 different from 2023: CLIs aren't just for humans typing commands anymore. AI coding agents like Claude Code, Codex, and Cursor's agent mode call CLI tools programmatically. This creates new auth challenges:

Delegated permissions. When Claude Code runs gh pr create on your behalf, it's using your GitHub credentials. But should an AI agent have the same permissions as you? The principle of least privilege says no, but most tools don't have a way to scope down agent access.

Credential exposure. If your API key is in an environment variable, every process can read it, including the AI agent's subprocess. Tools like Claude Code have addressed this with apiKeyHelper scripts that generate short-lived tokens on demand, but this isn't universal yet.

Headless auth for agents. An AI agent running in a sandboxed environment can't open a browser. Device code flow works here (the human approves on a separate device), but API keys are the more common solution because they require zero interaction.

Audit trails. When an AI agent makes API calls with your credentials, the audit log shows you made those calls. There's currently no standard way to distinguish "the human did this" from "the agent did this on behalf of the human."

This is an evolving space. The best thing you can do right now:

- Use scoped tokens with minimal permissions for agent workflows

- Prefer short-lived credentials (temporary tokens, not long-lived API keys)

- Use dedicated credentials for agent use, separate from your personal credentials

- Monitor API usage for unexpected patterns

Decision framework

Picking an auth method? Here's the short version:

"My users are developers on their local machines" → Browser-based OAuth with PKCE (best security + smooth UX)

"My CLI needs to work in SSH sessions and containers" → Device code flow as fallback, browser OAuth as primary

"This runs in CI/CD with no human in the loop" → Client credentials flow or scoped API keys

"I need the fastest implementation possible" → API keys (but add token rotation later)

"Enterprise customers need SSO and MFA" → Any OAuth flow (device code or browser), which supports the full enterprise auth stack

"AI agents will use this CLI" → Support API keys (for easy agent integration) + browser OAuth (for human users), with scoped permissions and short-lived tokens

FAQ

Is device code flow secure?

It's secure against most attacks, but it has a known phishing vulnerability. An attacker can generate a device code and trick users into authorizing it. This is why AWS moved to PKCE as the default for SSO login. For headless environments where PKCE isn't practical, device code flow is still the best option. Just be aware of the phishing risk.

Should I store tokens in environment variables?

For CI/CD: yes, because the CI platform encrypts them at rest and injects them at runtime. For local development: no. Use the OS keychain instead. Environment variables are visible in process listings and can be accidentally logged or leaked to child processes.

What's the difference between an API key and a personal access token?

Functionally, very little. Both are long-lived credentials that authenticate API requests. The distinction is mostly organizational: API keys are often scoped to a project or application, while personal access tokens are tied to a user account. Some services use the terms interchangeably.

How often should I rotate credentials?

Access tokens: every hour or less (handled automatically by the token refresh flow). Refresh tokens: rotate on every use (the auth server should do this). API keys: at least every 90 days, or immediately if you suspect exposure. In practice, most teams only rotate API keys after an incident, which is too late.

How do I handle auth in Docker containers?

Three options, in order of preference:

- Device code flow for interactive use (works because the browser can be on a different machine)

- Environment variables passed at runtime (docker run -e API_KEY=${API_KEY}) for CI/CD

- Volume-mounted credentials (docker run -v ~/.config/tool:/root/.config/tool:ro) for local development

Never bake credentials into the image. Never.

What about MCP (Model Context Protocol) authentication?

MCP is the emerging standard for AI agents to connect with external tools and services. It introduces a new auth dimension: the agent needs credentials to talk to the MCP server, which in turn needs credentials to talk to downstream APIs. This is still being standardized. For now, most MCP implementations use API keys or OAuth tokens passed through the MCP configuration. Expect this to evolve rapidly.

CLI auth is changing fast. What was best practice two years ago (device code flow as the default, plaintext credential files) is already being replaced. If you're adding auth to a CLI today, start with browser OAuth + PKCE for humans, API keys for automation, and plan for the day when AI agents are your primary users.